Meteorology and Weather Alteration

█ AGNES GALAMBOSI

Up to 40 percent of the estimated $10 trillion U.S. economy is affected by weather and climate each year. The National Aeronautics and Space Administration (NASA) and the National Oceanic and Atmospheric Administration (NOAA) are the two U.S. agencies with primary responsibility for developing technology related to earth observation (e.g., the design and operation of weather satellites) and meteorological monitoring.

Meteorology is the science that studies the processes and phenomena of the atmosphere. It consists of many areas: physical meteorology, dealing with physical aspects of the atmosphere such as rain or cloud formation; synoptic meteorology, the analysis and forecast of large-scale weather systems; dynamic meteorology, which is based on the laws of theoretical physics; climatology, the study of the climate of an area; aviation meteorology, researching weather information for aviation; atmospheric chemistry, examining the chemical composition and processes in the atmosphere; atmospheric optics, analyzing the optical phenomena of the atmosphere such as halos or rainbows; and agricultural meteorology, studying the relationship between weather and vegetation.

In his book Meteorologica, written c. 340 B.C., Greek philosopher and scientist Aristotle (384–322 B.C.) was the first to record the use of the term meteorology. Aristotle's work summarized the knowledge of the day concerning atmospheric phenomena. He wrote speculatively about clouds, rain, snow, wind, and climatic changes, and although many of his findings later proved to be incorrect, many were insightful.

The fourteenth-century invention of weather measuring instruments made scientific study of atmospheric phenomena possible, but it was the seventeenth-century inventions of the thermometer, barometer (a device used to measure atmospheric pressure), and anemometer (a device used to measure wind speed) that laid the foundation for modern meteorological observation. In 1802, the first cloud classification system was formulated, and in 1805, a wind scale was first introduced. These measuring instruments and new ideas made it possible to gather actual data from the atmosphere that, in turn, provided the basis for the advancement of scientific theories involving atmospheric structure, properties (pressure, temperature, humidity, etc.), and governing physical laws.

In the early 1840s, the first weather forecasting services started with the ability to transmit observational data via telegraph. At that time, meteorology was still in a descriptive phase, resting on an empirical basis with few scientific theories.

Meteorological science was spurred by World War I military demands. Norwegian physicist Vilhelm Bjerknes (1862–1951) introduced a modern meteorological theory stating that weather patterns in the temperate middle latitudes are the result of the interaction between warm and cold air masses. His description of atmospheric phenomena and his forecasting techniques were based on the laws of physics and provided a template for modern dynamic meteorological modeling. By applying governing physical laws to a given set of atmospheric conditions, meteorologists could make predictions about future weather and climatic conditions.

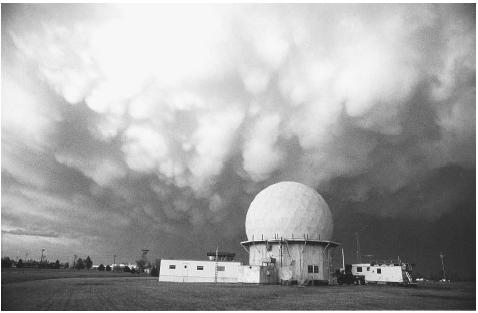

By the 1940s, upper-level measurements of pressure, temperature, wind, and humidity provided detailed insight into the vertical properties of the atmosphere. In the 1940s, Englishman R. C. Sutcliffe and Norwegian S. Petterssen developed three-dimensional analysis and forecasting methods. American military pilots flying above the Pacific during World War II discovered a strong stream of air rapidly flowing from west to east, which became known as the jet stream, an important factor in the movement of air masses. Weather radar first came into use in the United States in 1949 with the efforts of Horace Byers (1906–1998) and R. R. Braham. Conventional weather radar shows precipitation location and intensity. Ultimately, the development of radar, rockets, and satellites greatly improved data collection and weather forecasting.

In 1946, the process of cloud seeding made possible early weather modification experiments. In the 1950s, radar became important for detecting precipitation in remote areas. Also in the 1950s, with the advent of electronic computers, weather forecasting became not only quicker but also more reliable, because computers could more rapidly solve the mathematical equations of atmospheric models. In 1960, the first meteorological satellite was launched.

Satellites now give three-dimensional data to high-speed computers for faster and more precise weather predictions. Modern computers are capable of plotting observational data and performing both short-term and long-term modeling analysis, ranging from next-day weather forecasting to decades-long climatic models. Even so, computers still have capacity limits, the models still have many uncertainties, and the effects of the atmosphere on our complex society and environment can be serious. Many complicated issues remain at the forefront of meteorology, including air pollution, global warming, El Niño events, climate change, the ozone hole, and acid rain, making meteorology a scientific area still fraught with challenges and unanswered questions.

Weather forecasting. Weather forecasting is the attempt by meteorologists to predict the state of the atmosphere at a near future time and the weather conditions that may be expected. Many military planners consider weather to be a "force multiplier" (i.e., forces prepared to operate effectively in adverse conditions can fare substantially better than unprepared forces). Accurate weather forecasts are especially critical to the tactical operation of aviation and naval forces.

In the United States, weather forecasting is the responsibility of the National Weather Service (NWS), a division of the National Oceanic and Atmospheric Administration (NOAA) of the Department of Commerce. NWS maintains more than 400 field offices and observatories in all 50 states and overseas. The future modernized structure of the NWS will include 116 weather forecast offices (WFO) and 13 river forecast centers, all collocated with WFOs. WFOs also collect data from ships at sea all over the world and from meteorological satellites. Each year the NWS collects nearly four million pieces of information about atmospheric conditions from these sources.

The information collected by WFOs is used in the weather forecasting work of NWS. The data are processed by nine National Centers for Environmental Prediction (NCEP). Each center has a specific weather-related responsibility. Seven of the centers focus on weather prediction: the Aviation Weather Center, the Climate Prediction Center, the Hydrometeorological Prediction Center, the Marine Prediction Center, the Space Environment Center, the Storm Prediction Center, and the Tropical Prediction Center. The other two centers, the Environmental Modeling Center and NCEP Central Operations, develop and run complex computer models of the atmosphere and provide support to the other centers. Severe weather systems such as thunderstorms, tornadoes, and hurricanes are monitored at the National Storm Prediction Center in Norman, Oklahoma, and the National Hurricane Center in Miami, Florida. Hurricane watches and warnings are issued by the National Hurricane Center's Tropical Prediction Center in Miami (serving the Atlantic, Caribbean, Gulf of Mexico, and eastern Pacific Ocean) and by the Forecast Office in Honolulu (serving the central Pacific). WFOs, other government agencies, and private meteorological services rely on NCEP's information, and many of the weather forecasts in newspapers and on radio and television originate at NCEP.

Global weather data are collected at more than 1,000 observation points around the world and then sent to central stations maintained by the World Meteorological Organization, a specialized agency of the United Nations. Global data are also sent to the NCEP centers of the NWS for analysis and publication.

According to steady-state or trend models, weather conditions are strongly influenced by the movement of air masses that often can be charted quite accurately. A weather map might show that a cold front is moving across the Great Plains of the United States from west to east with an average speed of 10 mph (16 kph). It might be reasonable to predict that the front would reach a place 100 mi (161 km) to the east in a matter of 10 hours. Since characteristic types of weather often are associated with cold fronts, it then might be reasonable to predict the weather at locations east of the front with some degree of confidence.
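The arithmetic behind such a trend forecast can be sketched in a few lines of Python. This is only an illustration under the steady-state assumption that the front keeps its current speed and direction; the function names are hypothetical and not part of any forecasting system.

```python
MILES_TO_KM = 1.609344  # exact definition of the international mile


def miles_to_km(miles: float) -> float:
    """Convert a distance (or a speed per hour) from miles to kilometers."""
    return miles * MILES_TO_KM


def hours_until_arrival(distance_mi: float, speed_mph: float) -> float:
    """Travel time of a front, assuming it keeps a constant speed (hypothetical helper)."""
    if speed_mph <= 0:
        raise ValueError("speed must be positive for the front to arrive")
    return distance_mi / speed_mph


if __name__ == "__main__":
    # Values taken from the cold-front example in the text.
    print(hours_until_arrival(100, 10), "hours")
    print(round(miles_to_km(100)), "km to the east")
```

A real front rarely holds a constant speed, which is one reason this kind of estimate is treated as a first approximation.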

A similar approach to forecasting is called the analogue method because it uses analogies between existing weather maps and similar maps from the past. For example, suppose a weather map for December 10, 2003, is found to be almost identical to a weather map for January 8, 1996. Because the weather that followed the earlier date is already known, it might be reasonable to predict similar weather patterns for the later date.
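As a rough sketch of how an analogue search might work, the Python below treats each weather map as a short list of surface pressures at fixed grid points and picks the archived map with the smallest root-mean-square difference from today's map. The similarity measure, the archive layout, and all pressure values are assumptions made for illustration; the text does not specify how maps are compared.

```python
import math


def rms_difference(map_a: list[float], map_b: list[float]) -> float:
    """Root-mean-square difference between two maps sampled at the same grid points."""
    if len(map_a) != len(map_b) or not map_a:
        raise ValueError("maps must be non-empty and the same size")
    squared = sum((a - b) ** 2 for a, b in zip(map_a, map_b))
    return math.sqrt(squared / len(map_a))


def closest_analogue(today: list[float], archive: dict[str, list[float]]) -> str:
    """Return the date of the archived map most similar to today's map."""
    return min(archive, key=lambda date: rms_difference(today, archive[date]))


# Invented pressures in hectopascals at four grid points (hypothetical data).
ARCHIVE = {
    "1996-01-08": [1012.0, 1008.5, 1003.0, 998.5],
    "1998-12-02": [1020.0, 1018.0, 1015.5, 1011.0],
    "2001-11-30": [1001.0, 1004.5, 1009.0, 1013.5],
}
TODAY = [1011.5, 1008.0, 1003.5, 999.0]

if __name__ == "__main__":
    print("Closest analogue:", closest_analogue(TODAY, ARCHIVE))
```

The weather that followed the selected date would then serve as the forecast, with the usual caveat that no two atmospheric states are truly identical.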

Another form of weather forecasting makes use of statistical probability. In some locations on Earth's surface, one can safely predict the weather because a consistent pattern has already been established. In parts of Peru, it rains no more than a few inches per century. A weather forecaster in this region might feel confident that he or she could predict clear skies for tomorrow with a 99.9% chance of being correct.

The complexity of atmospheric conditions is reflected in the fact that none of the forecasting methods outlined above is dependable for more than a few days, at best. This reality does not prevent meteorologists from attempting to make long-term forecasts, but accuracy declines as the forecast interval increases.

The basis for long-range forecasting is a statistical analysis of weather conditions over an area in the past. For example, a forecaster might determine that the average snowfall in December in Grand Rapids, Michigan, over the past 30 years had been 15.8 in (40.1 cm). A reasonable way to estimate next year's December snowfall in Grand Rapids would be to assume that it might be close to 15.8 in (40.1 cm). This kind of statistical data is augmented by studies of global conditions such as winds in the upper atmosphere and ocean temperatures. If a forecaster knows that the jet stream over Canada has been diverted southward from its normal flow for a period of months, that change might alter precipitation patterns over Grand Rapids over the next few months.
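The statistical baseline described here amounts to using the historical mean as the forecast. The Python sketch below shows that calculation; the three snowfall totals are invented stand-ins for a 30-year record, chosen only so that their mean matches the 15.8 in figure in the text.

```python
from statistics import fmean

INCHES_TO_CM = 2.54  # exact conversion factor


def climatological_forecast(past_totals_in: list[float]) -> float:
    """Forecast next season's total as the mean of past totals (inches)."""
    if not past_totals_in:
        raise ValueError("at least one past observation is required")
    return fmean(past_totals_in)


def inches_to_cm(inches: float) -> float:
    """Convert a depth from inches to centimeters."""
    return inches * INCHES_TO_CM


# Hypothetical December snowfall totals in inches, not real observations.
PAST_DECEMBERS = [12.0, 18.5, 16.9]

if __name__ == "__main__":
    forecast_in = climatological_forecast(PAST_DECEMBERS)
    print(f"{forecast_in:.1f} in ({inches_to_cm(forecast_in):.1f} cm)")
```

Adjustments for jet stream position or ocean temperatures would be layered on top of this baseline rather than replacing it.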

The term numerical weather prediction is something of a misnomer because all forms of forecasting make use of numerical data such as temperature, atmospheric pressure, and humidity. More precisely, numerical weather prediction refers to forecasts that are obtained by using complex mathematical calculations carried out with high-speed computers.

Numerical weather prediction is based on mathematical models of the atmosphere. A mathematical model is a system of equations that attempts to describe the properties of the atmosphere and changes that may take place within it. These equations can be written because the gases that make up the atmosphere obey the same physical and chemical laws that gases at Earth's surface follow. For example, Charles' Law says that when a gas is heated at constant pressure, it expands. This law applies to gases in the atmosphere as it does to gases in a laboratory.
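Charles' Law is simple enough to state as a one-line calculation: at constant pressure, the volume of a fixed amount of gas is proportional to its absolute temperature. The Python sketch below assumes ideal-gas behavior, and the function name is hypothetical. A full atmospheric model couples many such relations rather than applying one in isolation.

```python
KELVIN_OFFSET = 273.15  # 0 degrees Celsius expressed in kelvins


def charles_law_volume(v1: float, t1_celsius: float, t2_celsius: float) -> float:
    """New volume of an ideal gas heated or cooled at constant pressure.

    Uses V2 = V1 * T2 / T1 with temperatures converted to kelvins.
    """
    t1_k = t1_celsius + KELVIN_OFFSET
    t2_k = t2_celsius + KELVIN_OFFSET
    if t1_k <= 0 or t2_k <= 0:
        raise ValueError("temperatures must be above absolute zero")
    return v1 * t2_k / t1_k


if __name__ == "__main__":
    # One cubic meter of air warmed from 0 to 30 degrees Celsius.
    print(f"{charles_law_volume(1.0, 0.0, 30.0):.3f} cubic meters")
```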

The technical problem that meteorologists face is that atmospheric gases are influenced by many different physical and chemical factors at the same time. A gas that expands according to Charles' Law may also be decomposing because of chemical forces acting on it. Meteorologists select a group of equations that describe the conditions of the atmosphere as completely as possible for any one location at any one time. This set of equations can never be complete because even a computer is limited as to the number of calculations it can complete in a reasonable time. Thus, meteorologists must select the factors they predict will be the most important in influencing the development of atmospheric conditions.

The accuracy of numerical weather predictions depends primarily on two factors. First, the more data that are available to a computer, the more accurate its results. Second, the faster the computer, the more calculations it can perform and the more accurate its output will be. In the period from 1955 (when computers were first used in weather forecasting) to the current time, the percent skill of forecasts has improved from about 30 percent to more than 60 percent. The percent skill measure was invented to describe how much better a weather forecast is likely to be than pure chance.
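The text does not give a formula for percent skill. One common way to express skill relative to chance, offered here only as an assumed illustration, is to scale the number of correct forecasts so that a chance-level forecaster scores 0 and a perfect forecaster scores 100. The function name and sample counts below are hypothetical.

```python
def percent_skill(forecast_hits: int, chance_hits: float, total: int) -> float:
    """Skill relative to chance, as a percentage (assumed formulation).

    forecast_hits: number of correct forecasts
    chance_hits:   number of correct forecasts expected from pure chance
    total:         number of forecasts issued
    """
    if total <= 0 or chance_hits >= total:
        raise ValueError("need total > 0 and chance_hits < total")
    return 100.0 * (forecast_hits - chance_hits) / (total - chance_hits)


if __name__ == "__main__":
    # Invented counts: 80 of 100 forecasts correct, 50 expected by chance.
    print(percent_skill(80, 50, 100), "percent skill")
```

Under this formulation a score can be negative, which would indicate forecasts that perform worse than chance.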

Today, an accurate next-day forecast often is possible. For periods of less than a day, a forecast covering an area of 100 sq mi (259 sq km) is likely to be quite dependable.

In the 1990s, the more advanced Doppler radar, which can continuously measure wind speed in addition to precipitation location and intensity, came into wide use. By using mathematical models to analyze data automatically, calculators and computers gave meteorologists the ability to process large amounts of data and make complex calculations quickly. Today the integration of communications, remote sensing, and computer systems makes it possible to produce forecasts in near real time. Weather satellites, the first launched in 1960, can now produce sequence photography showing cloud and frontal movements, water-vapor concentrations, and temperature changes.

In addition to directly affecting the operational effectiveness of military and other security forces, accurate forecasts serve emergency planners, who rely on forecast maps to predict the dissemination patterns of nuclear, chemical, and biological materials.

Cloud seeding. Starting in the 1940s, researchers experimented with modifying precipitation patterns.

After about three years of investigative work at the General Electric Research Laboratory in Schenectady, New York, researcher Irving Langmuir and his assistant, Vincent Joseph Schaefer, created the first human-made rainfall. Their work had originated as war-influenced research on airplane wing icing. On November 13, 1946, Schaefer sprinkled several pounds of dry ice (frozen carbon dioxide) from an airplane into a supercooled cloud, a cloud in which the water droplets remain liquid at temperatures below freezing. He then flew under the cloud to experience a self-induced snowfall. The snow changed to rain by the time it reached Langmuir, who was observing the experiment on the ground.

Langmuir and Schaefer selected dry ice as cloud "seed" for its quick cooling ability. As the dry ice travels through the cloud, the water vapor behind it condenses into rain-producing crystals. As the crystals gain weight, they begin to fall and grow larger as they collide with other droplets.

Another General Electric scientist who had worked with Langmuir and Schaefer, Bernard Vonnegut, developed a different cloud-seeding strategy. The formation of water droplets requires microscopic nuclei. Under natural conditions, these nuclei can consist of dust, smoke, or sea salt particles. Instead of using dry ice as a catalyst, Vonnegut decided to use substitute nuclei around which the water droplets in the cloud could condense. He chose silver iodide as this substitute because the shape of its crystals resembled the shape of the ice crystals he was attempting to create.

The silver iodide was not only successful, it had practical advantages over dry ice. It could be distributed from the ground through the use of cannons, smoke generators, and natural cumulonimbus cloud updrafts. Also, it could be stored indefinitely at room temperature.

A nucleation event is the process of condensation or aggregation (gathering) in which larger drops or crystals form around a material that acts as a structural nucleus. Introducing such structural nuclei can often induce condensation or crystal growth. Accordingly, nucleation is one of the ways that a phase transition can take place in a material.

The principles of nucleation are important in explaining a wide variety of geophysical and geochemical phenomena, including crystal formation. They were also used in cloud seeding weather modification experiments, where nuclei of inert materials were dispersed into clouds in the hope of inducing condensation and rainfall.

During a phase transition, a material changes from one form to another. For example, ice melts to form liquid water, or a liquid boils to form a gas. Phase transitions occur due to changes in temperature. Certain transitions occur smoothly throughout the whole material, while others happen suddenly at different points in the material. When the transitions occur suddenly, a bubble forms at the point where the transition began, with the new phase inside the bubble and the old phase outside. The bubble expands, converting more and more of the material into the new phase. The creation of a bubble is called a nucleation event.

Phase transitions are grouped into two categories, known as first order transitions and second order transitions. Nucleation events happen in first order transitions. In this kind of transition, there is an obstacle to the transition occurring smoothly. A prime example is condensation of water vapor to form liquid water. Condensation requires that many water molecules collide and stick together almost simultaneously. This requirement for simultaneous collisions presents a temporary but measurable barrier to the formation of a bubble of liquid phase. Following formation, the bubble expands as more water molecules strike the surface of the bubble and are absorbed into the liquid phase. Because of the obstacle to the phase transition, a substance may persist in its gaseous state even though the temperature is well below the boiling point.

A substance in this state is said to be supercooled. For a substance to remain supercooled, it must be pure, because dust or other impurities act as nucleation centers. If it is very pure, it may remain supercooled for a long time. A supercooled state is termed metastable because of its relatively long lifetime.

The other type of phase transition is called second order, and it proceeds simultaneously throughout the whole material. An example of a second order transition is the melting of a solid. As the temperature rises, the magnitude of the thermal vibrations of molecules causes the solid to break apart into a liquid form. As long as the solid is in thermal equilibrium and the melting occurs slowly, the transition takes place at the same time everywhere in the solid, rather than taking place through nucleation events at isolated points.

There is general disagreement over the success and practicality of cloud seeding. Opponents of cloud seeding contend that there is no real proof that the precipitation experienced by the seeders is actually of their own making. Proponents, on the other hand, declare that the effect of seeding may be more than local.

Regardless, until the 1990s, when efforts were generally abandoned for lack of scientific proof of their effectiveness, cloud seeding was an accepted part of the strategy to combat drought. During the Vietnam War, the U.S. attempted to deny use of roads and trails to the North Vietnamese by seeding clouds and inducing localized rainfall. The effectiveness of those attempts remains questionable.


SEE ALSO

FEMA (United States Federal Emergency Management Agency)

NOAA (National Oceanic & Atmospheric Administration)
